Evaluate, optimize, and fit a classifier

Sign up to the DEA Sandbox to run this notebook interactively from a browser

Compatibility: Notebook currently compatible with the DEA Sandbox environment

Background

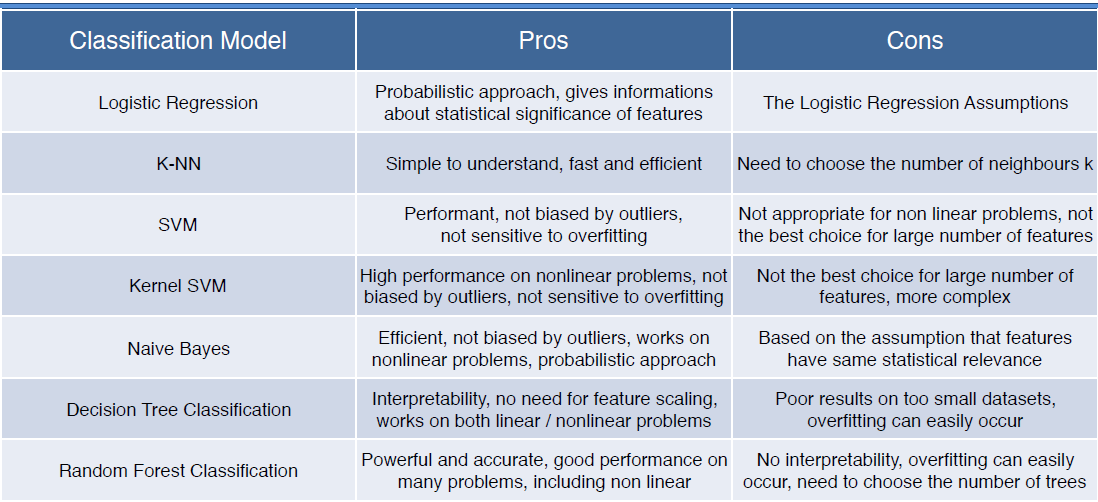

Now that we’ve extracted training data from the ODC and inspected it to ensure the features we selected are appropriate and useful, we can train a machine learning model. The first step is to decide which machine learning model to use, and the right choice depends on the classification task at hand. The table below provides a useful summary of the pros and cons of different models (all of which are available through scikit-learn). This scikit-learn cheat sheet may also help.

Table 1: Some of the pros and cons of different classifiers available through scikit-learn

The approach to evaluating, optimizing, and training the supervised machine learning model demonstrated in this notebook has been developed to suit the default training dataset provided. The training dataset is small, contains geospatial data, and contains only two classes (crop and non-crop).

Because the dataset is relatively small (n = 430, as shown in the Extract_training_data notebook), splitting the data into a training and testing set and training the model only on the smaller training set would likely degrade the quality of the model substantially. Thus, we will fit the final model on all the training data. Because we are fitting the model on all the data, we won’t have a testing set left over to estimate the model’s prediction accuracy. We therefore rely on a method called nested k-fold cross-validation to estimate the prediction ability of our model. This method is described further in the text preceding the relevant code cell.

And because we are generating a binary prediction (crop/non-crop), the metrics used to evaluate the classifier are those which are well suited to binary classifications.

While the approach described above works well for the default training data provided, it may not suit your own classification problem. It is advisable to research the different methods for evaluating and training a model to determine which approach is best for you.

Description

This notebook runs through evaluating, optimizing, and fitting a machine learning classifier (in the default example, a Random Forest model is used). Under each of the sub-headings you will find more information on how and why each method is used. The steps are as follows:

Demonstrate how to group the training data into spatial clusters to assist with Spatial K-fold Cross Validation (SKCV)

Calculate an unbiased performance estimate via nested cross-validation

Optimize the hyperparameters of the model

Fit a model to all the training data using the best hyperparameters identified in the previous step

Save the model to disk for use in the subsequent notebook, 4_Classify_satellite_data.ipynb
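The first step listed above refers to Spatial K-fold Cross Validation (SKCV). The idea can be sketched as follows, under stated assumptions: each training sample has x/y coordinates (synthetic here, since the default training CSV is not assumed to contain them), nearby samples are grouped with k-means clustering, and scikit-learn's `GroupKFold` keeps each cluster entirely within either the training or the testing fold. The cluster count and coordinate values are illustrative only, and this is not necessarily how the DEA workflow implements SKCV.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.model_selection import GroupKFold

rng = np.random.default_rng(0)

# Hypothetical sample coordinates (e.g. projected x/y in metres); the default
# training CSV is NOT assumed to contain these columns.
coords = rng.uniform(0, 10_000, size=(430, 2))
X = rng.normal(size=(430, 5))      # placeholder feature matrix
y = rng.integers(0, 2, size=430)   # placeholder crop (1) / non-crop (0) labels

# Group nearby samples into spatial clusters (cluster count is illustrative)
n_clusters = 6
cluster_ids = KMeans(n_clusters=n_clusters, n_init=10, random_state=0).fit_predict(coords)

# GroupKFold keeps every cluster whole, so no cluster appears in both train and test
skcv = GroupKFold(n_splits=3)
for fold, (train_idx, test_idx) in enumerate(skcv.split(X, y, groups=cluster_ids), start=1):
    shared = set(cluster_ids[train_idx]) & set(cluster_ids[test_idx])
    print(f"fold {fold}: train={len(train_idx)}, test={len(test_idx)}, "
          f"shared clusters={len(shared)}")
```

Holding out whole clusters makes the test folds spatially separated from the training data, which usually gives a less optimistic estimate than random folds when nearby samples are correlated.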

Getting started

To run this analysis, run all the cells in the notebook, starting with the “Load packages” cell.

Load packages

[1]:

# -- scikit-learn classifiers, uncomment the one of interest----
# from sklearn.svm import SVC
# from sklearn.tree import DecisionTreeClassifier
from sklearn.ensemble import RandomForestClassifier
# from sklearn.naive_bayes import GaussianNB
# from sklearn.linear_model import LogisticRegression
# from sklearn.neighbors import KNeighborsClassifier

# import sys
import numpy as np
import pandas as pd
from joblib import dump
from pprint import pprint
import matplotlib.pyplot as plt
from sklearn.model_selection import GridSearchCV, ShuffleSplit, KFold
from sklearn.metrics import roc_curve, auc, balanced_accuracy_score, f1_score

Analysis Parameters

training_data: Name and location of the training data file output from running 1_Extract_training_data.ipynb (a CSV file in the default example).

Classifier: The scikit-learn classification model to use. First uncomment the classifier of interest in the Load packages section, then enter the class name into this parameter, e.g. Classifier = SVC.

metric: A single string naming the scorer used to select the best hyperparameters when refitting the estimator, and to evaluate the predictions on the test set. See the scoring parameter page here for a list of predefined options, e.g. metric='balanced_accuracy'.

ncpus: The number of CPU cores used to fit models in parallel.

[2]:

training_data = "results/test_training_data.csv"
Classifier = RandomForestClassifier
metric = 'balanced_accuracy'
ncpus = 2
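If you swap in a different classifier, note that the nested cross-validation loop later in this notebook constructs it as `Classifier(random_state=1, n_jobs=1)` and also calls `predict_proba`. One quick way to check which of the optional classifiers in Load packages accept both keyword arguments is to inspect `get_params()`, as in this sketch (the helper name `missing_kwargs` is hypothetical):

```python
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.naive_bayes import GaussianNB
from sklearn.neighbors import KNeighborsClassifier
from sklearn.svm import SVC
from sklearn.tree import DecisionTreeClassifier

# Keyword arguments the nested-CV loop passes: Classifier(random_state=1, n_jobs=1)
REQUIRED = ("random_state", "n_jobs")


def missing_kwargs(cls):
    """Return the required keyword arguments that `cls` does not accept."""
    params = cls().get_params()
    return [name for name in REQUIRED if name not in params]


for cls in (RandomForestClassifier, LogisticRegression, DecisionTreeClassifier,
            SVC, GaussianNB, KNeighborsClassifier):
    print(f"{cls.__name__:<24} missing: {missing_kwargs(cls) or 'none'}")
```

Any classifier that reports a missing keyword would need the `Classifier(...)` call in the loop adjusted. `SVC` would additionally need `probability=True` for `predict_proba` to be available.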

K-Fold Cross Validation Analysis Parameters

outer_cv_splits: The number of cross-validation splits to use for the outer loop of the nested CV. These splits are used to estimate the accuracy of the classifier. A good default is 3-5.

inner_cv_splits: The number of cross-validation splits to use for the inner loop of the nested CV. The inner-loop splits are used for optimizing the hyperparameters. A good default is 3-5.

test_size: The fraction of the dataset set aside as the testing dataset in each of the ShuffleSplit train/test splits used for hyperparameter optimization. There is a trade-off between having a larger test set, which helps us better determine the quality of our classifier, and leaving enough data to train the classifier. A good default is to set 10-20% of your dataset aside for testing purposes.

[3]:

inner_cv_splits = 3
outer_cv_splits = 3
test_size = 0.20
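With n = 430 samples and three outer and three inner splits, each inner model in the nested scheme is trained on only about two-thirds of two-thirds of the data (roughly 44%). The sketch below counts the samples in each split using a placeholder array with the same number of rows; only the row count quoted in the text is assumed.

```python
import numpy as np
from sklearn.model_selection import KFold

n_samples = 430  # training-set size quoted in the text
outer_cv_splits = 3
inner_cv_splits = 3

X_dummy = np.zeros((n_samples, 1))  # placeholder features; only the row count matters

outer_cv = KFold(n_splits=outer_cv_splits, shuffle=True, random_state=0)
outer_test_sizes = []
inner_train_sizes = []
for train_idx, test_idx in outer_cv.split(X_dummy):
    outer_test_sizes.append(len(test_idx))
    # The inner loop only ever sees the outer-training rows
    inner_cv = KFold(n_splits=inner_cv_splits, shuffle=True, random_state=0)
    for inner_train_idx, _ in inner_cv.split(X_dummy[train_idx]):
        inner_train_sizes.append(len(inner_train_idx))

print(outer_test_sizes)                                # [144, 143, 143]
print(min(inner_train_sizes), max(inner_train_sizes))  # 190 192
```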

Import training data

[4]:

df = pd.read_csv(training_data)

# Split into features (X) and class labels (y), following scikit-learn naming conventions
X = df.drop('class', axis=1)
y = df['class']

Calculate an unbiased performance estimate via nested cross-validation

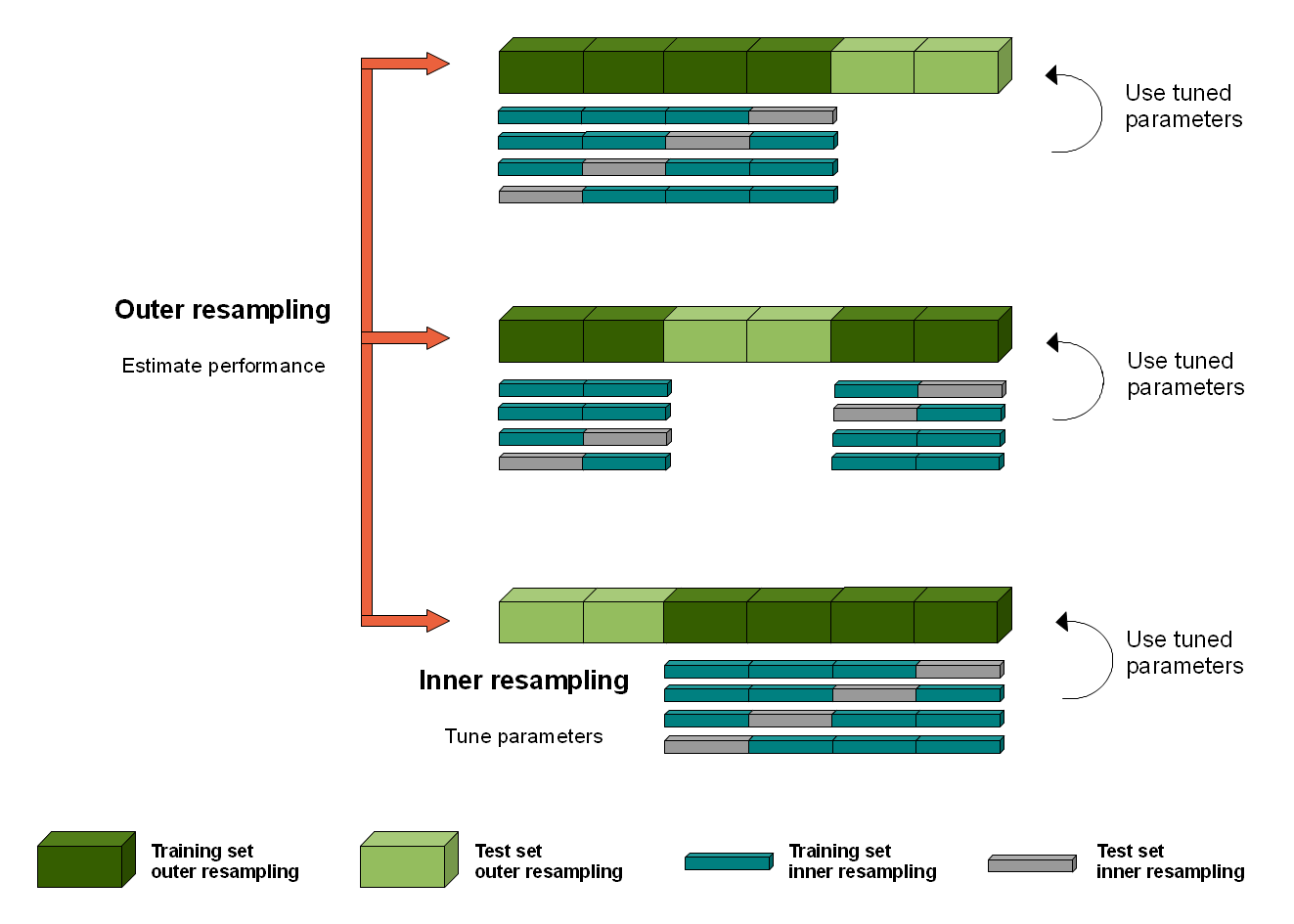

K-fold cross-validation is a statistical method used to estimate the performance of machine learning models when making predictions on data not used during training. It is a popular method because it is conceptually straightforward and because it generally results in a less biased or less optimistic estimate of the model skill than other methods, such as a simple train/test split.
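A minimal illustration of plain (non-nested) k-fold cross-validation with scikit-learn's `cross_val_score`, using a synthetic dataset from `make_classification` as a stand-in for the crop/non-crop training table. The 5 folds and the Random Forest settings are arbitrary choices for the example:

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import KFold, cross_val_score

# Synthetic binary dataset standing in for the crop/non-crop training table
X, y = make_classification(n_samples=430, n_features=8, random_state=0)

cv = KFold(n_splits=5, shuffle=True, random_state=0)
scores = cross_val_score(RandomForestClassifier(n_estimators=100, random_state=0),
                         X, y, cv=cv, scoring="balanced_accuracy")

# One score per held-out fold; the mean is the k-fold performance estimate
print(scores.round(3))
print(f"mean={scores.mean():.3f}, std={scores.std():.3f}")
```

Every sample is used for testing exactly once, which is why the averaged score tends to be less sensitive to a lucky or unlucky split than a single train/test split.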

This procedure can be used both when optimizing the hyperparameters of a model on a dataset, and when comparing and selecting a model for the dataset. However, when the same cross-validation procedure and dataset are used to both tune and select a model, it is likely to lead to an optimistically biased evaluation of the model performance.

One approach to overcoming this bias is to nest the hyperparameter optimization procedure under the model selection procedure. This is called nested cross-validation. The paper here provides more context to this issue. The image above depicts how nested cross-validation works.
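scikit-learn can express the same nested structure compactly, because a `GridSearchCV` object is itself an estimator that `cross_val_score` can evaluate. This is a sketch on synthetic data with a deliberately small grid, shown as an equivalent formulation of the idea rather than as the loop this notebook uses (the notebook's explicit loop additionally records several metrics per fold):

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import GridSearchCV, KFold, cross_val_score

X, y = make_classification(n_samples=300, n_features=8, random_state=0)

# Small illustrative grid; the notebook's own param_grid is larger
small_grid = {"max_features": ["sqrt", "log2"], "n_estimators": [50, 100]}

inner_cv = KFold(n_splits=3, shuffle=True, random_state=0)  # tunes hyperparameters
outer_cv = KFold(n_splits=3, shuffle=True, random_state=0)  # estimates performance

# Each outer fit runs a full inner grid search on the outer-training data only,
# so the outer test fold never influences which hyperparameters are chosen.
search = GridSearchCV(RandomForestClassifier(random_state=1), small_grid,
                      cv=inner_cv, scoring="balanced_accuracy")
nested_scores = cross_val_score(search, X, y, cv=outer_cv, scoring="balanced_accuracy")

print(nested_scores.round(3), nested_scores.mean().round(3))
```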

The result of our nested cross-validation will be a set of accuracy scores that show how well our classifier is doing at recognising unseen data points. The default example is set up to show the balanced accuracy score, the F1 score, and the area under the Receiver Operating Characteristic curve (ROC-AUC). This latter metric is a robust measure of a classifier’s prediction ability. This article has a good explanation of ROC-AUC, which is a common machine learning metric.

All three measures return a value between 0 and 1, with a value of 1 indicating a perfect score (for ROC-AUC, a value of 0.5 corresponds to random guessing).
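To make the three metrics concrete, this sketch scores a toy set of ten labels (invented for illustration; 1 = crop, 0 = non-crop) with the same scikit-learn functions imported in this notebook. The comments show the hand calculation each function should reproduce:

```python
import numpy as np
from sklearn.metrics import auc, balanced_accuracy_score, f1_score, roc_curve

# Toy labels: 0 = non-crop, 1 = crop (illustrative values only)
y_true = np.array([0, 0, 0, 0, 1, 1, 1, 1, 1, 1])
y_pred = np.array([0, 0, 0, 1, 1, 1, 1, 1, 1, 0])
# Predicted probability of class 1, as returned by predict_proba(...)[:, 1]
probs = np.array([0.1, 0.2, 0.3, 0.6, 0.7, 0.8, 0.9, 0.95, 0.85, 0.4])

bal_acc = balanced_accuracy_score(y_true, y_pred)  # mean per-class recall: (3/4 + 5/6) / 2
f1 = f1_score(y_true, y_pred)                      # precision = recall = 5/6 for class 1
fpr, tpr, _ = roc_curve(y_true, probs)
roc_auc = auc(fpr, tpr)                            # 23 of 24 (crop, non-crop) pairs ranked correctly

print(round(bal_acc, 3), round(f1, 3), round(roc_auc, 3))  # 0.792 0.833 0.958
```

Note that balanced accuracy and F1 use the hard predictions, whereas ROC-AUC uses the probabilities and so measures how well the classifier ranks crop above non-crop regardless of the decision threshold.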

To conduct the nested cross-validation, we first need to define a grid of parameters to be used in the optimization:

* param_grid: a dictionary of model-specific parameters to search through during hyperparameter optimization.

Note: the parameters in the param_grid object depend on the classifier being used. The default example is set up for a Random Forest classifier; to adjust the parameters to suit a different classifier, look up the important parameters in the relevant scikit-learn documentation.

[5]:

# Create the parameter grid based on the results of random search
param_grid = {
    'class_weight': ['balanced', None],
    'max_features': ['log2', 'sqrt'],
    'n_estimators': [100, 200, 300],
    'criterion': ['gini', 'entropy']
}
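The number of models a grid search trains grows multiplicatively with the grid. scikit-learn's `ParameterGrid` enumerates the combinations, reproducing the "24 candidates, totalling 72 fits" reported by the hyperparameter optimization step for three splits. The SVC grid at the end is a hypothetical example of adapting the grid to another classifier; its values are illustrative, not recommendations:

```python
from sklearn.model_selection import ParameterGrid

# Same grid as the notebook cell above
param_grid = {
    'class_weight': ['balanced', None],
    'max_features': ['log2', 'sqrt'],
    'n_estimators': [100, 200, 300],
    'criterion': ['gini', 'entropy'],
}

candidates = list(ParameterGrid(param_grid))
n_splits = 3  # matches inner_cv_splits / outer_cv_splits in this notebook
print(len(candidates), len(candidates) * n_splits)  # 24 72
print(candidates[0])  # one concrete combination GridSearchCV will try

# Hypothetical grid for an SVC (values illustrative only)
svc_grid = {'C': [0.1, 1, 10], 'gamma': ['scale', 'auto'], 'probability': [True]}
print(len(ParameterGrid(svc_grid)))  # 6
```

In the nested loop, this cost is paid once per outer split, so the total number of fits there is roughly `outer_cv_splits` times larger.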

[6]:

outer_cv = KFold(n_splits=outer_cv_splits, shuffle=True,
                 random_state=0)

# lists to store results of CV testing
acc = []
f1 = []
roc_auc = []
i = 1
for train_index, test_index in outer_cv.split(X, y):
    print(f"Working on {i}/{outer_cv_splits} outer CV split", end='\r')
    model = Classifier(random_state=1, n_jobs=1)

    # Index training and testing data for this outer split
    X_tr, X_tt = X.iloc[train_index, :], X.iloc[test_index, :]
    y_tr, y_tt = y.iloc[train_index], y.iloc[test_index]

    # Inner split on data within outer split
    inner_cv = KFold(n_splits=inner_cv_splits,
                     shuffle=True,
                     random_state=0)

    clf = GridSearchCV(
        estimator=model,
        param_grid=param_grid,
        scoring=metric,
        n_jobs=ncpus,
        refit=True,
        cv=inner_cv.split(X_tr, y_tr),
    )

    clf.fit(X_tr, y_tr)

    # Predict using the best model
    best_model = clf.best_estimator_
    pred = best_model.predict(X_tt)

    # Evaluate model with multiple metrics
    # ROC AUC (uses the predicted probability of class 1)
    probs = best_model.predict_proba(X_tt)
    probs = probs[:, 1]
    fpr, tpr, thresholds = roc_curve(y_tt, probs)
    auc_ = auc(fpr, tpr)
    roc_auc.append(auc_)

    # Balanced accuracy
    ac = balanced_accuracy_score(y_tt, pred)
    acc.append(ac)

    # F1 scores
    f1_ = f1_score(y_tt, pred)
    f1.append(f1_)

    i += 1

Working on 3/3 outer CV split

[7]:

print("=== Nested K-Fold Cross-Validation Scores ===")
print("Mean balanced accuracy: "+ str(round(np.mean(acc), 2)))
print("Std balanced accuracy: "+ str(round(np.std(acc), 2)))
print('\n')
print("Mean F1: "+ str(round(np.mean(f1), 2)))
print("Std F1: "+ str(round(np.std(f1), 2)))
print('\n')
print("Mean roc_auc: "+ str(round(np.mean(roc_auc), 3)))
print("Std roc_auc: "+ str(round(np.std(roc_auc), 2)))
print('=============================================')

=== Nested K-Fold Cross-Validation Scores ===

Mean balanced accuracy: 0.97

Std balanced accuracy: 0.02

Mean F1: 0.98

Std F1: 0.02

Mean roc_auc: 0.999

Std roc_auc: 0.0

=============================================

These scores represent a robust estimate of the accuracy of our classifier based on the small amount of training data we have.

It is also possible that the map accuracy will differ from the accuracies reported here, since training data is not always a perfect representation of the data in the real world. For example, we may have purposively over-sampled from hard-to-classify regions, or the proportions of classes in our dataset may not match the proportions in the real world. This point underscores the importance of conducting a rigorous and independent map validation, rather than relying on cross-validation scores.
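The effect of class proportions can be shown with simple arithmetic. Assuming a classifier with fixed, hypothetical per-class recalls (the 0.90 and 0.99 below are invented), overall accuracy is a weighted average of those recalls by class frequency, so it shifts when the real-world class mix differs from the training mix. Balanced accuracy, the metric used in this notebook, is the unweighted mean and does not depend on the mix:

```python
def overall_accuracy(recall_crop, recall_noncrop, crop_fraction):
    """Overall accuracy implied by fixed per-class recalls at a given class mix."""
    return crop_fraction * recall_crop + (1 - crop_fraction) * recall_noncrop


def balanced_accuracy(recall_crop, recall_noncrop):
    """Balanced accuracy: the unweighted mean of per-class recall."""
    return (recall_crop + recall_noncrop) / 2


# Hypothetical per-class recalls for one unchanged classifier
recall_crop, recall_noncrop = 0.90, 0.99

for crop_fraction in (0.5, 0.1):
    acc = overall_accuracy(recall_crop, recall_noncrop, crop_fraction)
    print(f"crop fraction {crop_fraction:.1f}: overall accuracy = {acc:.3f}")
print(f"balanced accuracy (any mix) = {balanced_accuracy(recall_crop, recall_noncrop):.3f}")
# crop fraction 0.5: overall accuracy = 0.945
# crop fraction 0.1: overall accuracy = 0.981
# balanced accuracy (any mix) = 0.945
```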

Optimize hyperparameters

Machine learning models require certain ‘hyperparameters’: settings that are not learned from the training data but can be tuned to increase the prediction ability of a model. Finding the best values for these parameters is called a ‘hyperparameter search’ or ‘hyperparameter optimization’.

To optimize the parameters in our model, we use GridSearchCV to exhaustively search through a set of parameters and determine the combination that gives the best score on the metric defined in the analysis parameters.

We’ll search the same set of parameters that we defined earlier, param_grid.

[8]:

# Generate outer_cv_splits random train/test splits
rs = ShuffleSplit(n_splits=outer_cv_splits, test_size=test_size, random_state=0)
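`ShuffleSplit` draws each train/test split independently, so unlike `KFold` folds, test sets can overlap between iterations. A sketch of what a splitter configured like the one above produces for n = 430 and `test_size=0.20`, using a placeholder array (only the row count matters):

```python
import numpy as np
from sklearn.model_selection import ShuffleSplit

X_dummy = np.zeros((430, 1))  # placeholder; n = 430 as quoted in the text
rs = ShuffleSplit(n_splits=3, test_size=0.20, random_state=0)

test_sets = []
for train_idx, test_idx in rs.split(X_dummy):
    print(len(train_idx), len(test_idx))  # 344 86 on every iteration
    test_sets.append(set(test_idx))

# Number of samples that appear in the test set of both the first and second split
print(len(test_sets[0] & test_sets[1]))
```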

[9]:

# Instantiate a GridSearchCV
clf = GridSearchCV(Classifier(n_jobs=1),
                   param_grid,
                   scoring=metric,
                   verbose=1,
                   cv=rs.split(X, y),
                   n_jobs=ncpus)

clf.fit(X, y)

print('\n')
print("The most accurate combination of tested parameters is: ")
pprint(clf.best_params_)
print('\n')
print(f"The {metric} score using these parameters is: ")
print(round(clf.best_score_, 2))

Fitting 3 folds for each of 24 candidates, totalling 72 fits

The most accurate combination of tested parameters is:

{'class_weight': 'balanced',

'criterion': 'gini',

'max_features': 'log2',

'n_estimators': 100}

The balanced_accuracy score using these parameters is:

0.99

Fit a model

Using the best parameters from our hyperparameter optimization search, we now fit our model on all the data.

[10]:

# Create a new model; fitting on X.values (a NumPy array rather than a DataFrame)
# avoids feature-name warnings when predicting on arrays later
new_model = Classifier(**clf.best_params_, random_state=1, n_jobs=ncpus)
new_model.fit(X.values, y)

[10]:

RandomForestClassifier(class_weight='balanced', max_features='log2', n_jobs=2,
                       random_state=1)


Save the model

Running this cell will export the classifier as a binary .joblib file. This will allow the model to be imported in the subsequent notebook, 4_Classify_satellite_data.ipynb.

[11]:

dump(new_model, 'results/ml_model.joblib')

[11]:

['results/ml_model.joblib']
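The saved file can be read back with `joblib.load`. A minimal round-trip sketch, fitting a small model on synthetic data and writing to a temporary directory rather than `results/`, to check that the restored model reproduces the original predictions. As a general caution, joblib files should only be loaded from trusted sources, and loading with a different scikit-learn version than the one used to save the model is not guaranteed to work:

```python
import tempfile
from pathlib import Path

import numpy as np
from joblib import dump, load
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier

X, y = make_classification(n_samples=200, n_features=6, random_state=0)
model = RandomForestClassifier(n_estimators=50, random_state=1).fit(X, y)

with tempfile.TemporaryDirectory() as tmp:
    path = Path(tmp) / "ml_model.joblib"
    dump(model, path)       # same call as the cell above, different location
    restored = load(path)   # what a downstream notebook would do

# The restored estimator should reproduce the original predictions exactly
print(np.array_equal(restored.predict(X), model.predict(X)))  # True
```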

Recommended next steps

To continue working through the notebooks in this Scalable Machine Learning on the ODC workflow, go to the next notebook, 4_Classify_satellite_data.ipynb.

Evaluate, optimize, and fit a classifier (this notebook)

Additional information

License: The code in this notebook is licensed under the Apache License, Version 2.0. Digital Earth Australia data is licensed under the Creative Commons by Attribution 4.0 license.

Contact: If you need assistance, please post a question on the Open Data Cube Discord chat or on the GIS Stack Exchange using the open-data-cube tag (you can view previously asked questions here). If you would like to report an issue with this notebook, you can file one on GitHub.

Last modified: Feb 2026

Tags

Tags: machine learning, SKCV, clustering, hyperparameters, Random Forest